There’s a story that keeps showing up in different forms across industries right now, and it goes something like this: a company deploys an AI tool, the AI makes a confident mistake, someone suffers a real loss, and then the question of who actually pays for that loss falls into a legal grey zone that nobody — not the company, not the insurer, not the regulator — was quite prepared for. In 2026, that story is no longer hypothetical. It’s playing out in courtrooms, insurance claims departments, boardrooms, and legislative chambers simultaneously, and the financial stakes are climbing by the quarter.

So let’s start where most people don’t: what AI liability actually means, why your existing business insurance probably doesn’t cover it, and what both businesses and consumers should be demanding right now before the next expensive algorithm error lands on someone’s doorstep.

What AI Liability Actually Means (And Why It’s Messier Than It Sounds)

The first thing worth understanding is that AI systems cannot be sued. They have no legal personhood, so when an AI causes harm, liability flows to the humans and organisations that created, deployed, or relied upon that system. That sounds simple enough on paper. In practice, it creates a multi-layered accountability problem, because the chain from algorithm to outcome can involve a model developer, a business that licensed the tool, a third-party API, a human employee who approved the output, and a client who relied on it, all in the span of one transaction.

The legal term that keeps appearing in court filings and insurance policies is “AI liability,” which refers to legal responsibility when AI systems cause harm, produce errors, or generate discriminatory outcomes. But the working definition of that phrase is still being constructed in real time. According to the Swiss Re Institute’s 2024 Sonar report, the insurance industry faces a critical challenge with “silent AI”: risk exposures within traditional policies that were not underwritten for algorithmic failure. In other words, your business may already have AI exposure that your insurer hasn’t priced in and may not even know exists yet.

The businesses most exposed aren’t necessarily the tech companies building the models — they’re the law firms, hospitals, financial advisors, marketers, and lenders who have plugged AI into their workflows and assumed the outputs are their team’s professional judgment. They’re not. And when those outputs cause harm, the liability question gets very complicated very fast. For businesses navigating fintech-specific AI risks, understanding how fintech regulation is evolving in Nigeria and globally is a useful starting point for context on the regulatory pressures bearing down on AI-powered financial services.

Chatbot Errors and the Business Losses Nobody Talks About

You may have heard of the Google Bard incident. In a promotional demonstration in February 2023, Google’s AI chatbot confidently stated that the James Webb Space Telescope had captured the first-ever image of an exoplanet. That claim was false: a different telescope had taken the first such image years earlier. Alphabet, Google’s parent company, lost roughly $100 billion in market capitalisation as its stock fell approximately 8 to 9 percent following the demonstration. One hallucination. One hundred billion dollars.

That was dramatic and public, but the quieter losses are happening at far smaller scales every single day. In one case, an airline’s chatbot gave customers incorrect policy information, which led to legal consequences once the error came to light. The company had to disable the bot entirely and absorb the resulting damage to customer trust. Critically, the defence that the AI itself made the mistake failed both in court and in public opinion: consumers and judges held the company responsible for its AI agent’s statements.

Then there are the legal sector disasters. A federal judge ordered two attorneys representing MyPillow CEO Mike Lindell to pay $3,000 each after they used artificial intelligence to prepare a court filing filled with more than two dozen mistakes, including hallucinated cases (fake cases invented entirely by AI tools). And that was only a sanction. Imagine a client who loses a case because their lawyers submitted fabricated precedent. The downstream liability from that error could be catastrophic, and no standard legal malpractice policy was designed to account for it.

Depending on the particular model, algorithm, and inputs, AI-produced outputs can be inconsistent, flawed, biased, or outright incorrect. A generative AI model can produce a correct result with one prompt, then generate a completely different and incorrect response to a highly similar question, and it will often present both responses with equal confidence, making it difficult for users to distinguish reliable from unreliable information. When that inconsistency reaches a public-facing, high-stakes setting such as a customer service bot, a legal research tool, or a financial advisory platform, the exposure is real and ongoing.

Autonomous Decision-Making: The Risk That Scales

Individual chatbot errors are embarrassing and expensive. But autonomous AI decision-making — where systems are making consequential choices without meaningful human review — is where liability exposure starts scaling in ways that traditional risk frameworks genuinely weren’t designed for.

Medicare Advantage plans have been named in federal lawsuits alleging that AI systems used to automate prior authorisation decisions led to the denial of medically necessary care. These cases are beginning to test the liability boundaries of insurers themselves when AI is placed at the heart of benefit determinations. One insurer. One AI model. Hundreds of thousands of denied claims, each one a potential lawsuit.

By early 2025, major health insurers Cigna, Humana, and UnitedHealth Group were all facing lawsuits for allegedly using AI to wrongfully deny medical claims. One filing cited an internal process in which an algorithm reviewed and rejected over 300,000 claims in just two months. The scale of autonomous decision-making is precisely what makes it so dangerous from a liability standpoint: when a human advisor makes a mistake, the error affects one client. When an AI model makes the same mistake, it affects every client in the dataset simultaneously.
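To put that scale in perspective, here is a rough back-of-the-envelope sketch. Only the 300,000 figure and the two-month window come from the filing mentioned above; the 61-day window and the human reviewer's daily throughput are assumptions chosen purely for illustration.

```python
import math

# Figure from the filing described above.
CLAIMS_REJECTED = 300_000
# Assumption: "two months" is treated as 61 calendar days.
WINDOW_DAYS = 61
# Assumption: a purely hypothetical daily throughput for one human reviewer.
HUMAN_REVIEWS_PER_DAY = 40


def rejections_per_day(total_claims: int, days: int) -> float:
    """Average number of automated rejections per calendar day."""
    return total_claims / days


def reviewer_days_needed(total_claims: int, reviews_per_day: int) -> int:
    """Working days a single human would need to review the same volume."""
    return math.ceil(total_claims / reviews_per_day)


if __name__ == "__main__":
    per_day = rejections_per_day(CLAIMS_REJECTED, WINDOW_DAYS)
    human_days = reviewer_days_needed(CLAIMS_REJECTED, HUMAN_REVIEWS_PER_DAY)
    print(f"Automated rejections per day: {per_day:,.0f}")
    print(f"Reviewer-days for one human at the assumed pace: {human_days:,}")
```

Under these assumptions the system averages several thousand rejections a day, a volume that would take a single reviewer thousands of working days. Change the assumed throughput and the numbers shift, but the gap between human and automated scale remains.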

When an AI recommendation engine provides faulty suggestions that cost a client millions in lost revenue, or a predictive analytics platform fails to identify critical business risks, the resulting professional liability claims can be substantial. Workday already faced claims over its AI tools in the first half of 2025. The Workday case is particularly instructive because it signals that liability for autonomous AI systems is no longer theoretical: it’s arriving in settled cases and court judgments, not just academic papers.

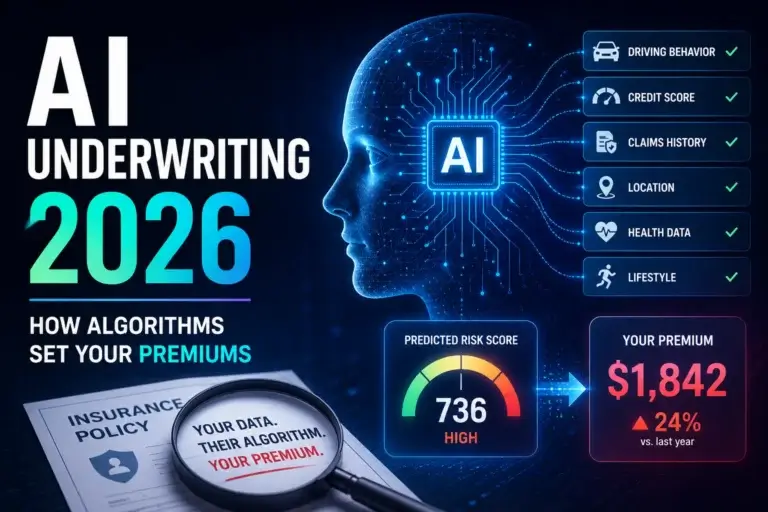

This is directly relevant for anyone engaged in AI underwriting, where models are increasingly being used to make or heavily influence coverage decisions. The liability exposure flows both ways: the insurer using AI to deny claims, and the businesses whose AI-generated decisions result in harm to third parties.

The Insurance Gap Nobody Warned You About

Here’s the part that should alarm every business leader who has integrated AI into core operations: your existing coverage almost certainly has gaps you haven’t found yet.

Traditional liability coverage often excludes the kinds of mistakes AI systems make, especially when legacy systems complicate coverage, leaving many organisations exposed unless they secure professional liability insurance, commonly called errors and omissions (E&O) insurance. But even E&O policies aren’t consistent on this point. The market right now is fragmented, contradictory, and moving fast.

AXA recently released an endorsement for its cyber policies that directly addresses risks associated with generative AI, stipulating coverage for a “machine learning wrongful act.” Coalition expanded its cyber insurance definitions to include an “AI security event” and deepened coverage for funds transfer fraud to include deepfake-enabled instructions. In contrast, some insurers are moving in the opposite direction: a Berkley-drafted exclusion would bar coverage for a broad array of artificial intelligence claims across D&O, E&O, and fiduciary liability policies.

So depending on who your insurer is and when your policy was written, you might have expanding AI coverage, explicit AI exclusions, or nothing at all addressing the question. Corgi, a new insurance company backed by Y Combinator, is now offering dedicated AI liability insurance, covering both the AI companies providing outputs and the businesses using those tools. Their product explicitly covers three AI risk categories: model performance and hallucination, algorithmic bias, and training data disputes. It’s one of the first signs that a genuinely specialised AI insurance market is starting to emerge, but the products are nascent and the pricing is still being figured out.

If you’re in financial services or the tech sector, this intersects heavily with cyber insurance — because many AI failures involve data breaches, model compromise, or deepfake fraud that straddles the line between AI liability and traditional cybersecurity coverage. Getting clarity on where one policy ends and another begins is urgent work that most businesses haven’t done.

The Regulatory Outlook: Laws Are Coming, Fast and Fragmented

The legislative picture in 2026 is genuinely complicated, and the word “fragmented” doesn’t quite capture how inconsistent the regulatory environment has become. At the federal level in the US, there’s still no comprehensive AI liability framework. At the state level, the picture is rapidly filling in. New York is considering legislation that would ban personalised algorithmic pricing in retail and permit plaintiffs to recover actual damages or statutory damages of no less than $5,000 per violation, plus treble damages and disgorgement.

New York has also proposed legislation that would create specific liability for chatbot developers and deployers under certain circumstances, with several states and the federal government actively considering bills to clarify AI liability issues in 2026.

In Europe, the framework is further along. The EU AI Act has been in force since August 2024, introducing a risk-based hierarchy from prohibited systems to high-risk applications, including credit scoring, autonomous vehicles, and medical diagnostics. For high-risk systems, full obligations apply as of August 2026. That means any business with European customers or operations is already living under a legal framework that will hold them to specific standards for exactly the kinds of autonomous decision-making covered in this article.

Organisations demonstrating robust AI governance can obtain affirmative AI coverage at competitive premiums in 2026. Those without governance frameworks face exclusions or prohibitive pricing. The message from both regulators and insurers is increasingly the same: document your human oversight, audit your models, and be prepared to prove it.
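What might “document your human oversight” look like in practice? One possibility is a simple, append-only decision log. The sketch below is a minimal illustration, not a compliance standard: the `DecisionRecord` fields, the function names, and the choice to store a hash of the input rather than the raw data are all assumptions made for this example.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class DecisionRecord:
    """One AI-assisted decision, with evidence of who (if anyone) reviewed it."""
    model_id: str            # hypothetical identifier for the model and version used
    input_digest: str        # SHA-256 of the input, so raw personal data stays out of the log
    ai_output: str           # what the model recommended
    reviewer: Optional[str]  # None means no human looked at it
    final_decision: str      # what the organisation actually did
    timestamp: str           # UTC time the record was created


def record_decision(model_id: str, raw_input: str, ai_output: str,
                    reviewer: Optional[str], final_decision: str) -> DecisionRecord:
    """Build an immutable record for a single decision."""
    digest = hashlib.sha256(raw_input.encode("utf-8")).hexdigest()
    return DecisionRecord(
        model_id=model_id,
        input_digest=digest,
        ai_output=ai_output,
        reviewer=reviewer,
        final_decision=final_decision,
        timestamp=datetime.now(timezone.utc).isoformat(),
    )


def had_human_review(record: DecisionRecord) -> bool:
    """True when a named person signed off on the decision."""
    return record.reviewer is not None


def to_log_line(record: DecisionRecord) -> str:
    """Serialise the record as one JSON line, suitable for an append-only log."""
    return json.dumps(asdict(record), sort_keys=True)
```

A record in which `reviewer` is empty and `final_decision` simply mirrors `ai_output` is exactly the pattern an insurer or regulator is likely to ask about, so being able to count such records is a reasonable first audit.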

Frequently Asked Questions

Q: Who is legally responsible when an AI system causes financial harm?

Courts consistently assign liability to the organisations and professionals deploying AI, not to the AI systems themselves. Organisations cannot avoid liability by claiming “the AI did it”: courts treat AI outputs as the organisation’s outputs, and fiduciary duty requires advisors to exercise independent judgment even when using AI tools.

Q: Does standard business insurance cover AI errors?

Not reliably. General liability policies may cover AI-related operational risks only where AI is deployed as part of insured business operations and not specifically excluded. However, many policies lack specific language to protect against AI misuse, and businesses should verify with their provider whether employment practices, data privacy, general liability, and other areas have adequate AI-related coverage.

Q: What type of insurance should a business using AI actually carry?

If AI influences your advice or decisions, you’re accountable for the outcome, and general liability won’t help. You need errors and omissions (E&O) insurance, which protects businesses providing advice, consulting, or tech services against claims of negligence, inaccurate advice, or professional mistakes. Supplementing that with a dedicated AI liability endorsement, where available, is increasingly prudent.

Q: Can AI vendors be held responsible instead of the businesses using their tools?

Many AI vendor contracts include indemnification clauses, but these typically exclude indirect damages like lost profits and reputational harm, cap liability at contract value (often far below actual exposure), require the customer to indemnify the vendor for how the AI is used, and disclaim fitness for a particular purpose. Vendor contracts are not a liability shield; they’re usually drafted to protect the vendor.
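A quick worked example shows how little a typical clause might recover. The sketch below assumes a contract that excludes indirect damages entirely and caps direct damages at a multiple of the contract value. The function names and every figure are hypothetical, and real contracts vary widely.

```python
def recoverable_from_vendor(direct_loss: float, contract_value: float,
                            cap_multiple: float = 1.0) -> float:
    """Amount the vendor might owe under the assumed clause.

    Assumptions: indirect damages are excluded entirely, and direct
    damages are capped at contract_value * cap_multiple.
    """
    cap = contract_value * cap_multiple
    return min(direct_loss, cap)


def residual_exposure(direct_loss: float, indirect_loss: float,
                      contract_value: float, cap_multiple: float = 1.0) -> float:
    """Loss left with the business after any vendor recovery."""
    total_loss = direct_loss + indirect_loss
    recovered = recoverable_from_vendor(direct_loss, contract_value, cap_multiple)
    return total_loss - recovered


if __name__ == "__main__":
    # Hypothetical figures: a 50,000 annual contract, 200,000 in direct losses,
    # and 800,000 in lost profits and reputational harm.
    left_over = residual_exposure(200_000, 800_000, 50_000)
    print(f"Exposure remaining with the business: {left_over:,.0f}")
```

With these made-up numbers, the business recovers 50,000 of a 1,000,000 loss and carries the remaining 950,000 itself, which is the gap insurance would need to fill.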

Q: Is there actual dedicated AI insurance available yet?

Armilla, a startup backed by Lloyd’s of London, has developed a dedicated insurance product intended to cover financial losses tied to underperforming or malfunctioning AI models. Corgi, backed by Y Combinator, launched modular AI liability coverage in May 2026 specifically designed for companies using AI, machine learning, or automated decision-making. Businesses select modules based on their specific risk exposure, each carrying its own limit and retention. The market is early but it is moving.

The Bottom Line

The question in 2026 isn’t whether AI will make expensive mistakes — it’s whether your business will be holding the bill when it does. The liability is real, the insurance market is catching up slowly and unevenly, and the regulatory environment is tightening from multiple directions at once. The businesses that will navigate this period best are the ones that treat AI governance not as a compliance exercise but as a genuine risk management discipline — auditing their models, documenting human oversight, reviewing their insurance coverage line by line, and understanding that “the algorithm decided” is not a legal defence that courts are accepting.

If you rely on AI to make or influence consequential decisions for clients, the time to close those coverage gaps is not after the first lawsuit.

This article is for informational purposes only and does not constitute legal or insurance advice. Always consult a qualified professional for guidance specific to your circumstances.