Most people don’t spend much time thinking about how their insurance premium gets calculated. You fill out a form, wait a few days, and a number appears. Maybe you shop around and find something cheaper, or maybe you just pay it and move on. But the process behind that number — who or what decided it, what data was used, and whether it’s actually fair — is quietly undergoing one of the biggest transformations in financial services history. Algorithms are now making decisions that used to take trained underwriters days to produce. They’re faster, often more accurate, and increasingly powerful. They’re also capable of getting things very wrong in ways that are hard to detect and harder to challenge.

What was experimental in 2024 and promising in 2025 has become operational reality in 2026: AI-driven claims processing, algorithmic underwriting, and predictive analytics are no longer competitive advantages; they’re table stakes. And yet most policyholders have no idea this shift has happened. This piece is an attempt to change that: to pull back the curtain on how AI underwriting actually works, what it gets right, where it goes wrong, and what regulators around the world are starting to do about it.

The Predictive Analytics Engine Behind Your Quote

Traditional insurance underwriting was always about prediction, even before computers existed. An actuary would look at aggregated historical data — how often people in a certain age group got into accidents, how frequently homeowners in a particular region filed claims — and build a risk model from those patterns. Algorithms do the same thing, only at a scale and speed that makes the old approach look like arithmetic by comparison.

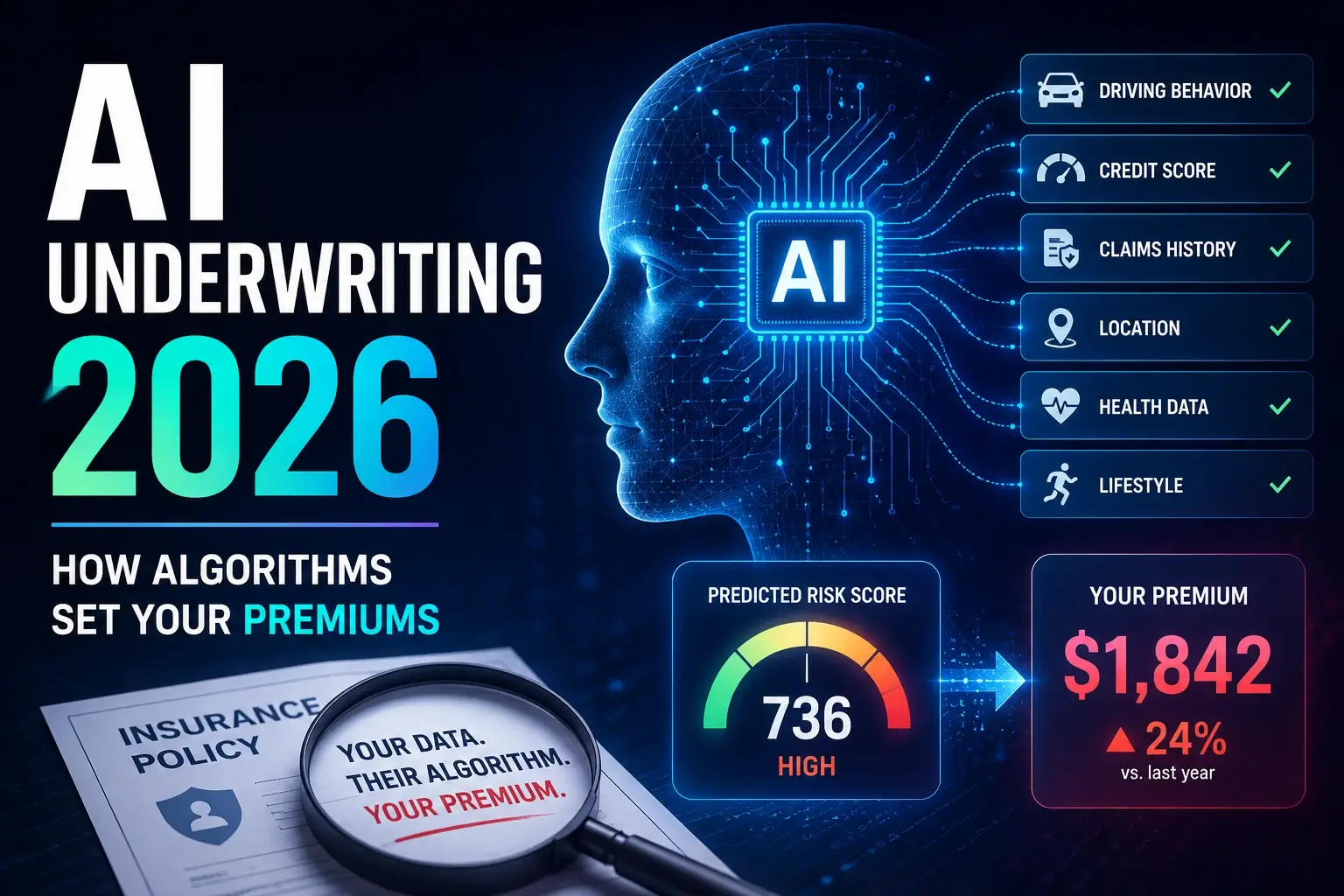

Unlike traditional underwriting, which relies on static historical data and actuarial models, AI-powered predictive analytics continuously learns from vast data sources, identifying subtle risk factors that may go unnoticed by human analysts. By analysing customer demographics, financial behaviour, health records, and even environmental conditions, AI can pinpoint potential risks with greater precision. The upshot for insurers is substantial. Reported figures suggest AI can cut underwriting time from three days to three minutes while improving risk assessment accuracy by 20%, enabling instant policy decisions through automated analysis of credit scores, medical records, IoT sensors, and satellite imagery.

Auto insurance has become the clearest example of this shift in action. In 2024, more than 21 million U.S. policyholders shared telematics data with their insurer — a 28% compound annual growth rate since 2018. Telematics data enables more accurate risk assessments and selection, allowing insurers to better match rates to actual risks. When a model can see how hard you brake, what time of day you drive, how often you exceed speed limits, and whether you use your phone behind the wheel, it doesn’t need to guess based on your postcode. It knows.
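To make the idea concrete, here is a minimal sketch of how behavioural signals like these might be folded into a premium. The field names, weights, and surcharge cap are all hypothetical, chosen only for illustration; they are not any insurer's actual model, and production systems typically use fitted models rather than hand-set weights.

```python
from dataclasses import dataclass


@dataclass
class TripSummary:
    """Driving behaviour aggregated over a rating period (hypothetical fields)."""
    hard_brakes_per_100km: float
    night_driving_share: float  # fraction of distance driven at night, 0..1
    speeding_share: float       # fraction of distance above the speed limit, 0..1
    phone_use_share: float      # fraction of trip time with the phone in use, 0..1


# Hypothetical weights: surcharge added per unit of each signal.
WEIGHTS = {
    "hard_brakes_per_100km": 0.02,
    "night_driving_share": 0.30,
    "speeding_share": 0.50,
    "phone_use_share": 0.80,
}
MAX_SURCHARGE = 0.50  # assumed cap: behaviour can raise the premium by at most 50%


def premium_multiplier(trip: TripSummary) -> float:
    """Return the factor applied to the base premium for this driver."""
    surcharge = sum(weight * getattr(trip, name) for name, weight in WEIGHTS.items())
    return 1.0 + min(surcharge, MAX_SURCHARGE)


def quote(base_premium: float, trip: TripSummary) -> float:
    """Price a policy from a base premium and observed behaviour."""
    return round(base_premium * premium_multiplier(trip), 2)


careful = TripSummary(0.0, 0.0, 0.0, 0.0)
risky = TripSummary(5.0, 0.2, 0.1, 0.05)

print(quote(1000.0, careful))  # base premium, no surcharge
print(quote(1000.0, risky))    # base premium plus a 25% behavioural surcharge
```

The point of the sketch is that nothing in it refers to where the driver lives: the price moves only with observed behaviour, which is the shift the telematics figures describe.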

The same logic is spreading into health and life insurance. Wearable technology has transformed health insurance underwriting by providing real-time health data — smartwatches and fitness trackers collect biometric data including heart rate, physical activity, and sleep patterns, allowing insurers to adjust premiums dynamically. From a pure efficiency standpoint, this is genuinely impressive progress. The question — and it’s a question that gets more urgent every year — is what happens when the model is wrong, biased, or simply drawing the wrong inferences from the right data. This is where the AI liability insurance conversation becomes directly relevant to how your premium gets set.

The Bias Problem: When Algorithms Discriminate Without Intending To

Here’s the thing about algorithmic bias that most people don’t fully appreciate: a model doesn’t have to be deliberately discriminatory to produce discriminatory outcomes. It just has to learn from data that reflects existing inequalities, then apply those learnings at scale. That’s not a hypothetical risk anymore — it’s a documented pattern.

A 2025 European study of 12.4 million insurance observations found AI models overcharged the poorest communities by 5.8% for life insurance and 7.2% for health coverage above fair-benchmark pricing. The NAIC’s 2025 health insurance survey also found that nearly one-third of health insurers don’t regularly test their AI models for bias. That last statistic deserves a second read. Nearly a third of health insurers — companies whose pricing decisions directly affect whether people can afford coverage — are not routinely checking whether their models treat people fairly.

The mechanism behind this is what researchers call proxy discrimination. Only 47% of insurers have deployed predictive modelling for risk evaluation, but the models that are in use often rely on proxy variables (ZIP codes, credit scores, education levels) that correlate with race and income. An algorithm that penalises you for living in a particular postcode isn’t explicitly discriminating on race. But if that postcode correlates strongly with racial demographics, the practical effect is the same, and the model has plausible deniability baked into its architecture.
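A toy example shows how this can happen even when the pricing function never sees group membership. The data below is synthetic, and the two postcodes, two groups, and tariff values are assumptions made up for the illustration.

```python
from collections import defaultdict

# Synthetic applicants as (postcode, group) pairs. Postcode "A" is 80% group X,
# postcode "B" is 80% group Y. The group label is never passed to the pricer.
applicants = (
    [("A", "X")] * 80 + [("A", "Y")] * 20
    + [("B", "X")] * 20 + [("B", "Y")] * 80
)

# Hypothetical postcode-only tariff.
TARIFF = {"A": 100.0, "B": 130.0}


def price(postcode: str) -> float:
    """Price using the postcode alone; no protected attribute is an input."""
    return TARIFF[postcode]


def average_premium_by_group(rows):
    """Audit step: average the quoted premium within each group."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for postcode, group in rows:
        totals[group] += price(postcode)
        counts[group] += 1
    return {group: totals[group] / counts[group] for group in totals}


averages = average_premium_by_group(applicants)
print(averages)  # {'X': 106.0, 'Y': 124.0}
```

Group Y pays about 17% more on average, although the pricing function only ever looked at the postcode. Note that the audit needs the group labels even though the model does not, which is one reason bias testing requires deliberate effort.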

The legal system is starting to catch up. All three major AI discrimination lawsuits in insurance survived motions to dismiss in 2025. Huskey v. State Farm, filed in 2022, alleges the insurer’s machine-learning fraud algorithms use racial proxies; the case is now in discovery. Insurers watching that case unfold will be well aware that surviving a motion to dismiss is not the same as winning, and that discovery in a machine-learning discrimination case means handing your model architecture and training data to opposing counsel. That prospect alone is motivating some quiet internal audits.

The cyber insurance sector faces a version of this problem too — where AI models assessing cyber risk may systematically underestimate exposure in certain industries or overweight easily-measurable variables while ignoring harder-to-quantify human factors.

How AI Pricing Models Actually Work in 2026

Step away from the ethics for a moment and look at the mechanics. AI underwriting uses machine learning, natural language processing, and anywhere from 500 to 1,500 or more variables to automate risk scoring, pricing, and approvals across insurance and lending. The scale of variables involved is part of what makes these models so powerful — and so difficult to explain.

For complex policies, AI helps reduce underwriting cycle time by 31% and improve risk assessment accuracy by 43%. It enables personalised customer experiences and innovative underwriting models such as behaviour-based dynamic pricing that can unlock up to 15% growth in revenue. Large carriers can achieve up to 30% improvement in portfolio performance and up to 3% better loss ratios through AI’s ability to factor unstructured, previously inaccessible data into risk profiles.

The market reflects this growing confidence in algorithmic underwriting. The AI in insurance market is projected to grow from $26.3 billion in 2026 to $114.52 billion by 2031, registering a compound annual growth rate of 34.20%. That’s not a rounding error — it’s a fundamental restructuring of a sector that has traditionally been among the most conservative in financial services.
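The growth rate quoted above can be checked with the standard compound annual growth rate formula. This is a small sketch; the function name is an illustrative choice, and the inputs are the two market-size figures from the paragraph.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: the constant yearly rate linking two values."""
    return (end_value / start_value) ** (1 / years) - 1


# $26.3 billion in 2026 growing to $114.52 billion by 2031 is five years of growth.
rate = cagr(26.3, 114.52, 2031 - 2026)
print(f"{rate:.1%}")
```

The result comes out at roughly 34.2% a year, so the projection and the stated growth rate are consistent with each other. At that pace the market more than quadruples in five years.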

One of the more consequential shifts is in embedded insurance — where coverage is bundled into purchases like car loans, electronics, or travel bookings. Analysts predict the global embedded insurance market could surpass $180 billion in gross written premium by 2026, driven by AI’s ability to contextualise risk and deliver instantaneous quotes. Crucially, the pricing happening at the point of embedded sale is often fully automated — the customer is quoted, approved, and bound in seconds, without any human underwriter ever reviewing the application. Continuous learning is central to these models — AI systems retrain on new outcomes data, improving prediction accuracy over time rather than degrading like static scorecards. For a deeper look at how this intersects with broader digital finance trends, the fintech regulation landscape provides useful context on where policy is heading.

Fairness Regulations: Who’s Setting the Rules and How Far Do They Go

The honest answer to “who’s regulating AI underwriting” in 2026 is: a lot of jurisdictions, with very different approaches, and no coherent global standard. That patchwork is creating real compliance headaches for insurers operating across borders — and leaving meaningful gaps for consumers in less regulated markets.

In the United States, the state-level picture is the most developed. California’s SB 1120, effective January 2025, prohibits health insurers from denying coverage based solely on an AI algorithm. Colorado’s SB 21-169 is the most aggressive state law on the books — it requires insurers to inventory every algorithm and external data source used in pricing, test for discriminatory outcomes, and submit annual compliance reports with attestation from a chief risk officer. The law expanded from life insurance to auto and property lines.
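What "testing for discriminatory outcomes" can look like in its simplest form is a comparison of outcome rates across groups. The sketch below uses an adverse impact ratio with a 0.8 threshold; that threshold is borrowed from the four-fifths rule in US employment guidance and is an assumption here, not the test that Colorado or any insurance regulator prescribes. The decision data is synthetic.

```python
from collections import Counter

THRESHOLD = 0.8  # assumed flagging threshold (four-fifths rule from employment guidance)


def approval_rates(decisions):
    """Compute the approval rate per group from (group, approved) pairs."""
    approved = Counter()
    total = Counter()
    for group, ok in decisions:
        total[group] += 1
        approved[group] += int(ok)
    return {group: approved[group] / total[group] for group in total}


def adverse_impact_ratio(rates):
    """Lowest group approval rate divided by the highest."""
    return min(rates.values()) / max(rates.values())


# Synthetic decisions: group X approved 90 of 100 times, group Y 63 of 100.
decisions = (
    [("X", True)] * 90 + [("X", False)] * 10
    + [("Y", True)] * 63 + [("Y", False)] * 37
)

rates = approval_rates(decisions)
ratio = adverse_impact_ratio(rates)
flagged = ratio < THRESHOLD

print(rates, round(ratio, 2), flagged)
```

Here the ratio is about 0.7, below the assumed threshold, so the model would be flagged for review. A flag like this is a starting point for investigation rather than proof of unlawful discrimination; real audits also examine whether the gap is explained by legitimate risk factors.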

At the national level in the US, the NAIC, which is an association of state insurance regulators rather than a federal agency, is pushing guidance rather than binding mandates, but its survey data is revealing. The NAIC’s Big Data and Artificial Intelligence Working Group completed its health insurance survey in 2025, finding that AI adoption rates are high: 92% of health insurers, 88% of auto insurers, 70% of home insurers, and 58% of life insurers report current or planned AI usage. Despite this widespread adoption, nearly one-third of health insurers still do not regularly test their models for bias or discrimination.

In Europe, the framework is more comprehensive and more binding. The EU Artificial Intelligence Act designates AI systems used in life and health insurance underwriting as high-risk systems, imposing rigorous requirements for bias testing, technical documentation, and post-deployment monitoring. Most of the EU AI Act’s rules will apply from August 2026 onwards — meaning any insurer with European exposure is now operating under an active compliance deadline, not a theoretical future obligation.

Increased focus on algorithmic transparency and bias mitigation will reward insurers prepared to meet stricter requirements. Carriers that initiated governance-first AI programmes in 2025 will be able to leverage them to win trust from customers, regulators, and partners. The insurers who ignored governance for efficiency gains are about to find out what the compliance bill looks like.

Frequently Asked Questions

Q: Can an AI algorithm legally charge you more based on your postcode or credit score?

In many jurisdictions, yes, though this is being actively contested. Bias and disparate impact are consistently among the top compliance risks in AI underwriting deployments. Machine learning models can amplify historical biases through proxy variables like ZIP code or education level. In 2025, the Massachusetts AG secured a $2.5 million settlement against a student loan company whose AI model created disparate impact against protected classes. The legality depends on the jurisdiction and the specific variable, but regulators are increasingly treating proxy discrimination as a source of liability regardless of intent.

Q: How do you know if your premium was set by an algorithm?

In most markets, you can’t tell directly. However, Colorado’s SB 21-169 requires insurers to inventory every algorithm and external data source used in pricing and submit annual compliance reports. As more states adopt similar requirements, the right to know whether an algorithm influenced your pricing is becoming a live policy question. You can ask your insurer directly, and increasingly they’re required to be able to answer.

Q: Are AI underwriting decisions more accurate than human decisions?

Generally, yes — in terms of statistical risk prediction. Studies show that machine learning models have improved risk prediction accuracy by 25% compared to traditional actuarial models. AI-driven NLP extracts insights from unstructured text, reducing document processing times by up to 70%. Accuracy in prediction is not the same as fairness in outcome, however — a model can be highly accurate at predicting who will file claims while simultaneously embedding structural disadvantage for certain groups.

Q: What happens if an insurer’s AI model turns out to be discriminatory?

Courts worldwide are demanding explainability in algorithmic systems. The CFPB has made clear that there are no exceptions to federal consumer financial protection laws for new technologies, and that an institution’s decision to use AI can itself constitute a policy that produces bias under the disparate impact theory of liability. In practice, this means potential class actions, regulatory enforcement, mandatory model audits, and — in severe cases — fines and operational restrictions.

Q: Should I share telematics or wearable health data with my insurer?

This is genuinely a trade-off decision. For insurers, telematics data enables more accurate risk assessment, more sophisticated pricing, better retention, and more effective acquisition of good risks. If your driving or health behaviours are better than average, sharing that data could lower your premiums meaningfully. If they’re worse than average, you’re effectively volunteering information that will cost you more. Read the data-sharing terms carefully, and understand that once the data is shared, how it’s used, now and in future model iterations, may not be fully transparent to you.

The Bottom Line

AI underwriting is not going away — the efficiency and pricing accuracy gains are simply too significant for the insurance industry to ignore. Predictive analytics allows insurers to forecast future risk trends and customer needs in ways that standard actuarial methods cannot, and the ultimate goal of underwriting — to charge precisely according to risk — is now becoming reality through AI-driven personalisation. But precision and fairness are not the same thing, and an industry built on pooled risk has a fundamental tension to resolve when individualisation becomes this granular.

What the next few years will determine is whether regulators, insurers, and civil society can build governance frameworks fast enough to keep pace with model deployment — and whether policyholders in less-regulated markets will be protected by the standards being set in Colorado, California, and Brussels, or left exposed by the gaps between them. Knowing how the system works is the first step to being able to navigate it.

This article is for informational purposes only and does not constitute insurance, legal, or financial advice. Always verify current policy terms and regulatory requirements with qualified professionals in your jurisdiction.